This week, I enacted a lesson in Dual Credit Precalculus using The Devil and Daniel Webster task. All this year, I’ve been following, as best I can, the recommendations from Peter Liljedahl’s Building Thinking Classrooms in Mathematics (BTC) book. I found this task particularly rich, and it showed how well students can persist on a challenging task at this point in the year, now that they’re used to thinking deeply about mathematics.

I first came across this task as an example in 5 Practices for Orchestrating Productive Mathematics Discussions (2nd ed., p. 99). The book describes a lesson plan, and more lesson plans are available on the NCTM Illuminations site. I also found an article by Maurice Burke that extends the activity by using a Computer Algebra System (CAS) inside a spreadsheet on the TI-Nspire, something I’d never seen done before.

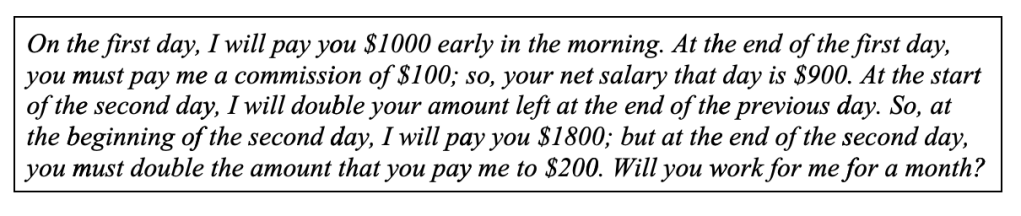

I decided not to give the students any written form of the task, since BTC recommends presenting tasks verbally. I also didn’t provide the specific questions or a pre-printed table. I simply told the story, first explaining the upside and then doing an “Oh, but I forgot” when I got to the commission. This was a great hook, and the students chimed in with comments about never trusting a deal with the devil and how it sounded too good to be true.

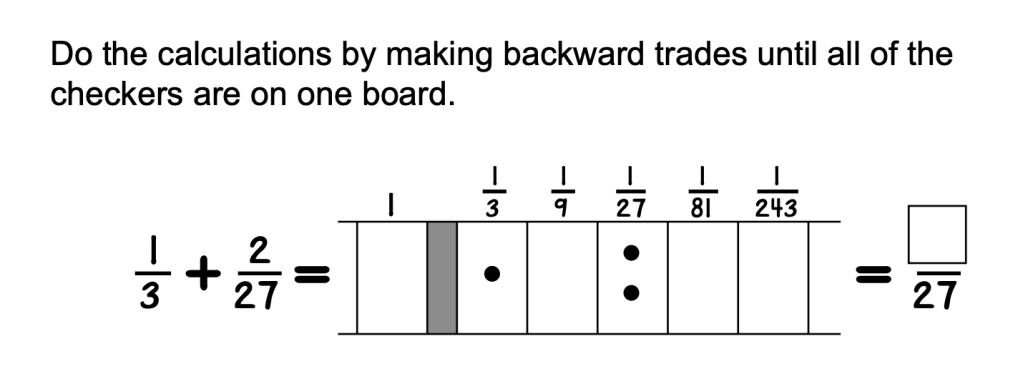

The first thing that needs clarification is that, in my opinion, the written task above does not clearly distinguish between the amount you have and the amount you’re being paid. Other published versions make this clearer. The intended rule is that the devil doubles the amount left at the end of the previous day, and that doubled amount is your current balance. When the written task says “I will pay you $1800,” it seems to suggest you get 1800 for that day, in addition to the 900 you received on day 1. However, based on the published answers and every other discussion of this problem, that is not the intended interpretation. When I did this problem with students, many of them tried to double the amount from the beginning of the previous day, for example getting $2000 for Day 2. I had anticipated this confusion and clarified it immediately, because changing that setup produces a different problem entirely, with a different mathematical structure.
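
To make the two readings concrete, here is a minimal Python sketch. It assumes a first-day payment of $1000 and a $100 commission that doubles each day; those figures are my assumption, chosen because they reproduce the $900, $1800, and $2000 amounts mentioned above. The function names `intended` and `misread` are illustrative only.

```python
def intended(days, first_payment=1000, first_commission=100):
    """End-of-day balances when each morning the devil doubles what was LEFT
    at the end of the previous day (the intended reading)."""
    balances = []
    balance = first_payment          # day 1 starts with the initial payment
    commission = first_commission
    for day in range(1, days + 1):
        if day > 1:
            balance *= 2             # double the previous end-of-day balance
        balance -= commission        # pay the commission at the end of the day
        balances.append(balance)
        commission *= 2              # assumed: the commission doubles daily
    return balances


def misread(days, first_payment=1000, first_commission=100):
    """End-of-day balances under the misreading: each morning's amount doubles
    the previous MORNING's amount, ignoring the commission already paid."""
    balances = []
    morning = first_payment
    commission = first_commission
    for day in range(1, days + 1):
        balances.append(morning - commission)
        morning *= 2                 # e.g. 1000 on day 1 becomes 2000 on day 2
        commission *= 2
    return balances


print(intended(4))  # [900, 1600, 2800, 4800]
print(misread(4))   # [900, 1800, 3600, 7200]
```

Under the misreading, every end-of-day balance is 900 times a power of 2, so the balance never reaches zero, which is one way to see why it is a different problem.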

Groups (formed in the visibly random fashion they are used to) immediately went to their whiteboards (Vertical Non-Permanent Surfaces in the parlance of BTC) and started making tables “by hand,” i.e., using a calculator but working through the problem day by day. Most of them created two or three columns of values in addition to the day number. Eventually, all groups concluded that it was not a good deal and that Daniel Webster goes broke after 10 days.
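
A short Python sketch of the kind of table the groups built, under assumed rules: a $1000 first-day payment, a $100 first-day commission that doubles daily, and each later morning's amount equal to double the previous end-of-day balance. Each row lists the day, the amount after the morning payment, the commission, and the end-of-day balance; the helper name `webster_table` is hypothetical.

```python
def webster_table(first_payment=1000, first_commission=100, max_days=50):
    """Rows of (day, amount after the morning payment, commission, end-of-day balance).

    Day 1 starts with the initial payment; every later morning the devil doubles
    the previous end-of-day balance. Stops on the first day the balance is <= 0.
    """
    rows = []
    balance = 0
    commission = first_commission
    for day in range(1, max_days + 1):
        morning = first_payment if day == 1 else 2 * balance
        balance = morning - commission
        rows.append((day, morning, commission, balance))
        if balance <= 0:
            break
        commission *= 2          # assumed: the commission doubles each day
    return rows


print("day", "morning", "commission", "end", sep="\t")
for row in webster_table():
    print(*row, sep="\t")
```

With these assumptions the table ends on day 10, when the morning amount and the commission are both 51200 and the balance drops to 0, matching the groups’ conclusion.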

The really interesting phase of the lesson began when I pushed them to vary the initial values and asked questions to advance their thinking toward a more abstract model of the scenario. I suggested some problem-solving avenues, such as trying to model particular columns or using tools like spreadsheets or a CAS.
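
Once the starting values are parameters instead of fixed numbers, exploring them takes only a short loop. This Python sketch assumes the commission doubles daily and each morning the devil doubles the previous end-of-day balance; the helper name `broke_day` is hypothetical. It reports the first day the end-of-day balance is zero or negative for a few starting values, the kind of data a group could use to look for a pattern.

```python
def broke_day(first_payment, first_commission, max_days=200):
    """First day on which the end-of-day balance is <= 0, or None if that
    does not happen within max_days. The commission is assumed to double daily."""
    balance = first_payment - first_commission   # end of day 1
    commission = first_commission
    day = 1
    while balance > 0 and day < max_days:
        day += 1
        commission *= 2
        balance = 2 * balance - commission       # double, then pay the commission
    return day if balance <= 0 else None


for payment, commission in [(1000, 100), (1000, 200), (1000, 300), (2000, 100)]:
    print(payment, commission, broke_day(payment, commission))
# last column: 10, 5, 4, 20
```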

I monitored student thinking using an anticipation guide I had prepared according to the 5 Practices framework. Across the two classes, a variety of anticipated and unanticipated strategies emerged. I used Burke’s progression of spreadsheet activities to guide students in writing formulas so that their spreadsheets would update dynamically when the initial values changed. One group conjectured a relationship between the initial payment and the day Daniel Webster goes broke. I had anticipated that groups would try to write an exponential function for the commission, and several did. I also showed one student a strategy suggested in the lesson plan: suspend evaluation at each stage, leaving the expressions in terms of powers of 2, in order to notice a pattern. I had to work through a few stages as examples before the student was able to continue, but it was an interesting way to attack the closed form.
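
As an illustration of the suspended-evaluation strategy, here is what the first few end-of-day balances look like when every product is left unevaluated. This assumes a first-day payment of 1000 and a first-day commission of 100 that doubles daily, with each morning’s amount equal to double the previous end-of-day balance; the notation $B_n$ for the balance at the end of day $n$ is mine, not the lesson plan’s.

$$
\begin{aligned}
B_1 &= 1000 - 100 \\
B_2 &= 2(1000 - 100) - 2 \cdot 100 = 2 \cdot 1000 - 2 \cdot 2 \cdot 100 \\
B_3 &= 2(2 \cdot 1000 - 2 \cdot 2 \cdot 100) - 2^2 \cdot 100 = 2^2 \cdot 1000 - 3 \cdot 2^2 \cdot 100 \\
B_4 &= 2(2^2 \cdot 1000 - 3 \cdot 2^2 \cdot 100) - 2^3 \cdot 100 = 2^3 \cdot 1000 - 4 \cdot 2^3 \cdot 100
\end{aligned}
$$

The pattern that suggests itself is $B_n = 2^{n-1} \cdot 1000 - n \cdot 2^{n-1} \cdot 100$, which gives 0 on day 10.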

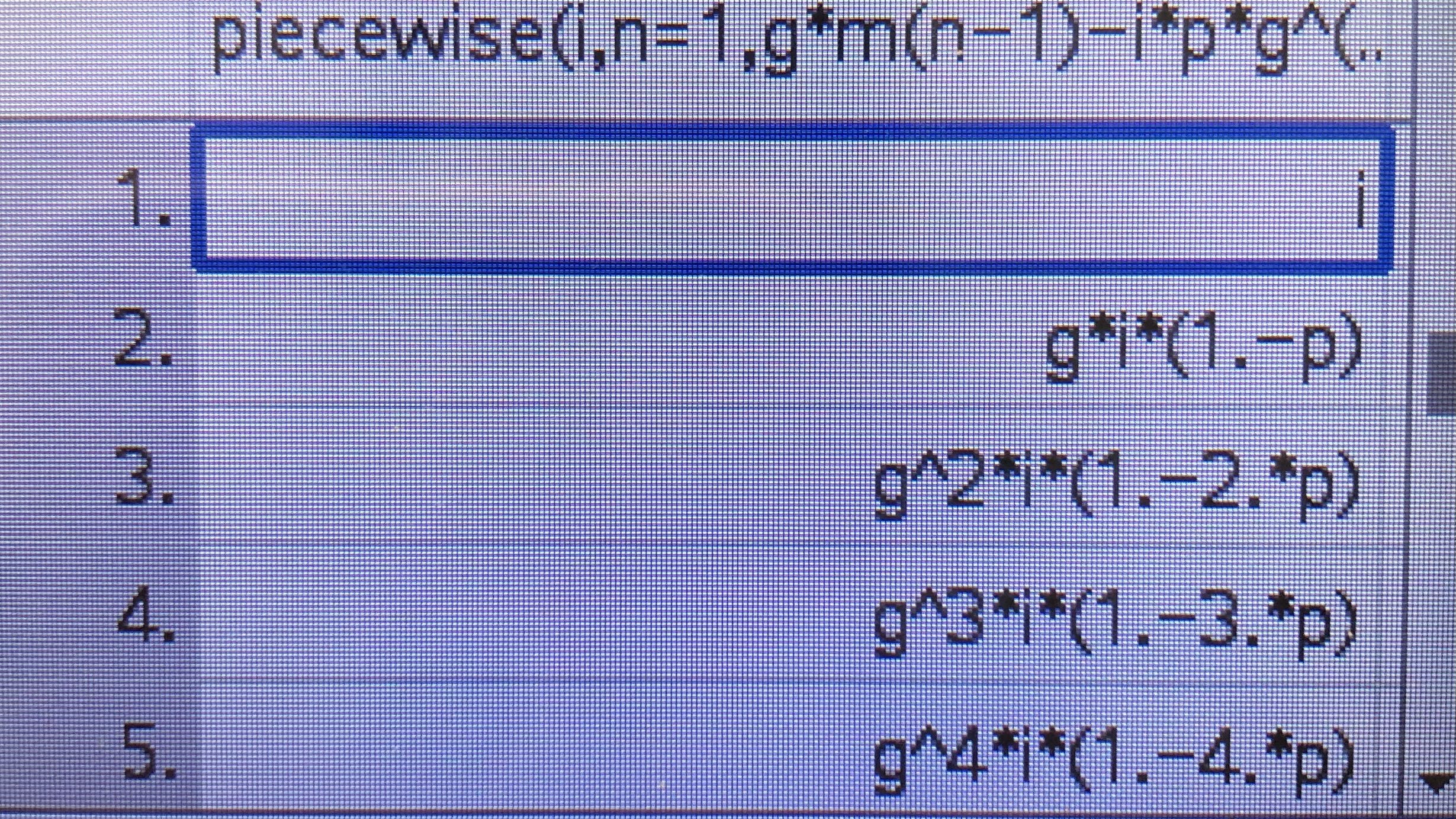

Several groups pursued strategies I did not anticipate. For example, one student had a feeling that because doubling was central to the problem, the closed form would be some variation on $2^x$. They tried many different equations, using Desmos to quickly check whether the graph and table matched their computed values. I tried to advance their thinking by helping them see that part of the function needed to grow faster than $2^x$, but guessing that $x \cdot 2^x$ should be involved was a bit of a stretch, I think. Another group was trying to express a recursive idea, so I helped them clean up their notation for a recursive formula and then model it on the TI-Nspire CAS. To write a simple recursive formula for the money at the end of the day, you need the closed form for the fee (the commission).
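
Checking a guessed closed form against the recursion is easy to automate, in the same spirit as the Desmos checking described above. This Python sketch assumes the rules $B_1 = P - c$ and $B_n = 2B_{n-1} - c \cdot 2^{n-1}$, where $P$ is the first day’s payment and $c$ is the first day’s commission, doubling daily (so the day-$n$ commission appears in closed form). The candidate $2^{n-1}(P - cn)$ is my own reconstruction under those assumptions, not a formula from the post or its sources, and the function names are hypothetical.

```python
def balance_recursive(n, first_payment=1000, first_commission=100):
    """End-of-day balance on day n from the recursion
    B_1 = P - c,  B_k = 2*B_{k-1} - c*2**(k-1)."""
    balance = first_payment - first_commission
    for day in range(2, n + 1):
        # the commission on day k is written in closed form as c * 2**(k-1)
        balance = 2 * balance - first_commission * 2 ** (day - 1)
    return balance


def balance_closed(n, first_payment=1000, first_commission=100):
    """Candidate closed form: an exponential term minus an n * 2**(n-1) term."""
    return 2 ** (n - 1) * first_payment - n * 2 ** (n - 1) * first_commission


# Compare the guess with the recursion for the first 20 days and a few starting values.
for payment, commission in [(1000, 100), (1500, 100), (700, 50)]:
    assert all(
        balance_recursive(n, payment, commission) == balance_closed(n, payment, commission)
        for n in range(1, 21)
    )
print("closed form matches the recursion")
```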

Something I didn’t think about ahead of time, because it wasn’t in any of the articles, was that if you take a recursive definition and parametrize it by replacing the given information with variables, the TI-Nspire can make an abstract table directly from that definition.

This is similar to Burke’s approach, but it builds the function from algebraic notation instead of coupled columns in a spreadsheet. I also found that you don’t need “expand” for the calculator to give a form with a recognizable pattern.
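
The idea of an abstract table can be mimicked outside the calculator. This Python sketch is not TI-Nspire syntax: it tracks each balance as a combination $a_n P + b_n c$ of the two parameters and updates the coefficients with the recursion, assuming $B_1 = P - c$ and $B_n = 2B_{n-1} - 2^{n-1}c$, where $P$ is the first day’s payment and $c$ the first day’s commission (doubling daily). The function name `abstract_table` is hypothetical.

```python
def abstract_table(days):
    """Rows (n, a_n, b_n) meaning B_n = a_n * P + b_n * c.

    Built straight from the recursion B_1 = P - c, B_n = 2*B_{n-1} - 2**(n-1) * c,
    without ever choosing numbers for P or c.
    """
    a, b = 1, -1                       # B_1 = 1*P + (-1)*c
    rows = [(1, a, b)]
    for n in range(2, days + 1):
        # doubling scales both coefficients; the commission only changes the c part
        a, b = 2 * a, 2 * b - 2 ** (n - 1)
        rows.append((n, a, b))
    return rows


for n, a, b in abstract_table(6):
    print(f"day {n}: {a}*P + ({b})*c")
```

The coefficients of $c$ come out as $-1, -4, -12, -32, -80, -192$, which become recognizable once each is divided by the matching power of 2 in the $P$ column.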

After class, I considered connections to later topics in math. I realized that the recurrence relation we were studying is analogous to a non-homogeneous first-order linear ODE. After I made this connection, it made more sense why this recurrence does not immediately lead to an obvious closed form, and why you need heavier machinery to attack it. I’m not very familiar with the theory of non-homogeneous linear recurrence relations, but I’m assuming there are analytic solutions in the discrete case analogous to the continuous version, probably based on characteristic polynomials. The recurrence itself is not too complicated, but guessing that the solution should have an exponential term and a term like $x \cdot 2^x$ is highly unintuitive without having studied difference or differential equations.
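
For this particular recurrence, there is also a short elementary route. Writing $B_n$ for the balance at the end of day $n$, $P$ for the first day’s payment, and $c$ for the first day’s commission (assumed to double daily), dividing by $2^{n-1}$ turns the recurrence into an arithmetic sequence. This is a standard technique that plays the role of an integrating factor, not one taken from the articles mentioned above.

$$
\begin{aligned}
B_1 &= P - c, \qquad B_n = 2B_{n-1} - c\,2^{n-1} \quad (n \ge 2) \\
\frac{B_n}{2^{n-1}} &= \frac{B_{n-1}}{2^{n-2}} - c \\
\frac{B_n}{2^{n-1}} &= (P - c) - (n-1)c = P - cn \\
B_n &= 2^{n-1}(P - cn) = 2^{n-1}P - n\,2^{n-1}c
\end{aligned}
$$

The $n\,2^{n-1}$ term appears because the commission $c\,2^{n-1}$ grows at the same rate as the homogeneous solution $2^n$ (characteristic root 2). This mirrors resonance in the ODE $y' = ky + e^{kt}$, whose solutions are $(C + t)e^{kt}$ rather than pure exponentials. The same formula says the balance first drops to zero or below on the first day $n \ge P/c$.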

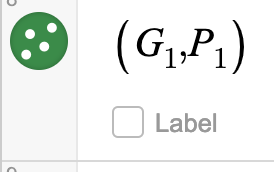

I also created a Desmos graph where you can vary the parameters for the first day’s salary and commission and see the effect on the graph and table of values.

After I completed the lessons, I added the strategies I did not anticipate in this first enactment to my anticipation guide, and I will use that guide the next time I teach this task. For anyone who is keen to teach this lesson, here is the complete anticipation guide.

My overall takeaway after finishing this challenging and rich task is that it showed me a version of what I hoped for when I started this journey with Building Thinking Classrooms. I wanted to create an environment where students in a neighborhood public high school could engage in high-level, authentic mathematical thinking. I’m excited for the rest of this school year as we continue to do more of these tasks and, hopefully, reap the benefits of a Thinking Classroom. There’s still a lot to learn about other parts of the framework, especially about getting the collective synergy of this classroom to translate consistently into individual achievement and understanding, but I am very pleased with the persistence in problem solving evident in this enactment of The Devil and Daniel Webster.